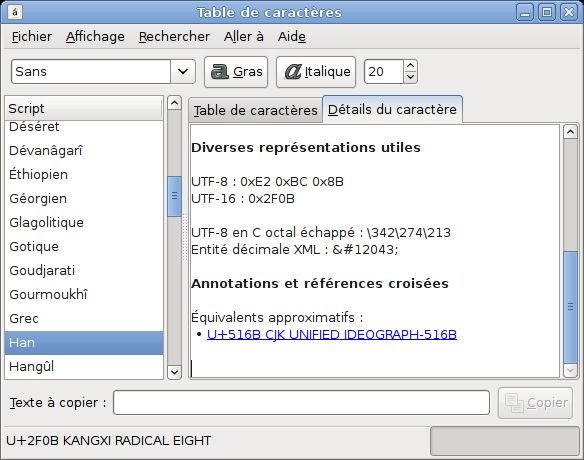

The screenshot shows Japanese, English and Thai text encoded as UTF-8. Converting it to the legacy Thai code page would lose the Japanese characters.
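To make the loss concrete, here is a minimal Python sketch. It assumes the "legacy Thai code page" is Windows code page 874 (`cp874`); the sample string and the helper name `to_legacy_thai` are made up for illustration.

```python
# Mixed-script sample: Japanese, English and Thai (illustrative text only).
text = "日本語 English ไทย"

# UTF-8 can represent every character, so the round trip is lossless.
round_trip = text.encode("utf-8").decode("utf-8")


def to_legacy_thai(s: str) -> str:
    """Push a string through cp874 (assumed legacy Thai code page).

    Characters the code page cannot represent are replaced with '?'.
    """
    return s.encode("cp874", errors="replace").decode("cp874")


print(round_trip == text)    # UTF-8 keeps everything
print(to_legacy_thai(text))  # the Japanese characters come back as '?'
```

Without `errors="replace"` the `encode` call raises `UnicodeEncodeError` instead, which is usually the safer default because the data loss cannot go unnoticed.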

Here in Russia we have four popular encodings, so your question is in great demand. You cannot detect an encoding from the character codes alone, because code pages overlap. Some code pages for different languages even overlap completely.

So we need another approach. The only way to work with unknown encodings is to work with probabilities. Instead of asking 'what is the encoding of this text?', we ask 'what is the most likely encoding of this text?' Someone on a popular Russian tech blog came up with this approach: build a probability table of character codes for every encoding you want to support. You can build it from large texts in your language (e.g. some fiction: Shakespeare for English, Tolstoy for Russian, lol).

You will get something like this: encoding1: 190 = 0.93009, 222 = 0.93009. encoding2: 239 = 0.93009, 207 = 0.93009. encodingN: charcode = probability. Next, take the text in the unknown encoding and, for every encoding in your 'probability dictionary', look up the probability of every symbol that occurs in that text.

Sum the probabilities of the symbols. The encoding with the highest score is the likely winner. Results are better for longer texts. If you are interested, I can gladly help you with this task. We can greatly increase the accuracy by also building a probability list for pairs of character codes. `mb_detect_encoding` certainly does not work. Please take a look at the `mb_detect_encoding` source code in 'ext/mbstring/libmbfl/mbfl/mbfl_ident.c'.
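The scoring idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the blog author's implementation: the four candidate encodings, the one-sentence Tolstoy sample (far too small for real use) and the names `build_profile`, `score` and `guess_encoding` are all chosen here for the example.

```python
from collections import Counter

# Assumed candidates: four single-byte Cyrillic encodings known to Python.
CANDIDATES = ["cp1251", "koi8_r", "cp866", "iso8859_5"]

# Tiny training text (opening line of Anna Karenina, lowercased).
# A real profile should be built from a much larger corpus.
SAMPLE = ("все счастливые семьи похожи друг на друга, "
          "каждая несчастливая семья несчастлива по-своему")


def build_profile(sample: str, encoding: str) -> dict:
    """Map each non-ASCII byte value to its relative frequency."""
    high = [b for b in sample.encode(encoding) if b >= 0x80]
    counts = Counter(high)
    return {byte: n / len(high) for byte, n in counts.items()}


PROFILES = {enc: build_profile(SAMPLE, enc) for enc in CANDIDATES}


def score(data: bytes, profile: dict) -> float:
    """Sum the profile probabilities of every non-ASCII byte in data."""
    return sum(profile.get(b, 0.0) for b in data if b >= 0x80)


def guess_encoding(data: bytes) -> str:
    """Return the candidate whose profile gives the highest score."""
    return max(PROFILES, key=lambda enc: score(data, PROFILES[enc]))


unknown = "привет, как дела".encode("koi8_r")
print(guess_encoding(unknown))
```

ASCII bytes are skipped because they are identical in all four candidates and so carry no information. Extending the profile keys from single bytes to byte pairs would give the two-character variant mentioned above.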

There is no completely accurate way to identify the charset of a string. There are ways to try to guess it. One of these, and probably the best currently available in PHP, is `mb_detect_encoding`. It scans your string and looks for byte sequences that are unique to certain charsets. Depending on your string, there may be no such distinguishing sequences. Take ISO-8859-1 vs. ISO-8859-15: only a handful of characters differ, and to make it worse, they are represented by the same bytes. Given a string whose encoding you do not know, there is no way to detect whether byte 0xA4 is supposed to signify ¤ or €, so there is no way to know its exact charset.
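A short Python sketch of the 0xA4 ambiguity, using Python's built-in `iso8859_1` and `iso8859_15` codecs (the variable names are illustrative):

```python
# The same single byte is a valid character in both charsets.
raw = bytes([0xA4])

as_latin1 = raw.decode("iso8859_1")    # currency sign
as_latin9 = raw.decode("iso8859_15")   # euro sign

print(as_latin1, as_latin9)

# Most other bytes decode identically, so they give a detector nothing
# to work with: 0xE9 is 'é' in both charsets.
same_in_both = b"caf\xe9".decode("iso8859_1") == b"caf\xe9".decode("iso8859_15")
print(same_in_both)
```

Both decodes succeed without error, which is exactly why no byte-level check can tell the two charsets apart.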

(Note: you could add a human factor, or an even more advanced scanning technique (e.g. what Oroboros102 suggests), to try to figure out from the surrounding context whether the character should be ¤ or €, though this seems like a bridge too far.) There are more distinguishable differences between, e.g., UTF-8 and ISO-8859-1, so it is still worth trying to figure it out when you are unsure, though you can and should never rely on the result being correct.
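One common way to exploit the difference between UTF-8 and ISO-8859-1 is to try a strict UTF-8 decode first and fall back only if it fails. The Python sketch below assumes those are the only two candidates; `decode_utf8_or_latin1` is a hypothetical helper name.

```python
def decode_utf8_or_latin1(data: bytes) -> tuple:
    """Return (text, charset_guess) for data assumed to be UTF-8 or ISO-8859-1.

    Non-ASCII ISO-8859-1 text is rarely valid UTF-8, so a successful strict
    UTF-8 decode is a strong hint. It is still a guess, not proof.
    """
    try:
        return data.decode("utf-8"), "utf-8"
    except UnicodeDecodeError:
        # Every byte sequence is valid ISO-8859-1, so this cannot fail.
        return data.decode("iso8859_1"), "iso8859_1"


print(decode_utf8_or_latin1("café".encode("utf-8")))
print(decode_utf8_or_latin1(b"caf\xe9"))
```

The fallback branch never raises, which also means it can never tell you that the input was in some third encoding.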

There are other ways of ensuring the correct charset, though.

Concerning forms, try to enforce UTF-8 as much as possible (check out the snowman trick to make sure your submission will be UTF-8 in every browser). That being done, you can at least be sure that every text submitted through your forms is UTF-8.

Concerning uploaded files, try running the Unix 'file -i' command on them, e.g. through exec (if that is possible on your server), to aid the detection (using the document's BOM).

Concerning scraped data, you could read the HTTP headers, which usually specify the charset. When parsing XML files, see whether the XML metadata contains a charset definition.

Rather than trying to automagically guess the charset, you should first try to ensure a certain charset yourself where possible, or try to grab a definition from the source you are getting the data from (if applicable), before resorting to detection.

The main problem for me is that I don't know what encoding the source of any string is going to be. It could be from a text box (enforcing a charset on the form is only useful if the user actually submitted the form), or it could be from an uploaded text file, so I really have no control over the input.
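Two of these "ask the source first" checks are easy to sketch in Python: looking for a byte order mark at the start of a file, and reading the `charset` parameter from a `Content-Type` header value. The function names are hypothetical, and the header parsing borrows the standard library's `email.message.Message` as a convenience.

```python
import codecs
from email.message import Message

# UTF-32 BOMs are listed first because the UTF-32-LE BOM begins with the
# same two bytes as the UTF-16-LE BOM.
_BOMS = [
    (codecs.BOM_UTF32_LE, "utf-32-le"),
    (codecs.BOM_UTF32_BE, "utf-32-be"),
    (codecs.BOM_UTF8, "utf-8"),
    (codecs.BOM_UTF16_LE, "utf-16-le"),
    (codecs.BOM_UTF16_BE, "utf-16-be"),
]


def charset_from_bom(data: bytes):
    """Return the charset announced by a leading BOM, or None."""
    for bom, name in _BOMS:
        if data.startswith(bom):
            return name
    return None


def charset_from_content_type(header_value: str):
    """Return the lowercased charset parameter of a Content-Type value, or None."""
    msg = Message()
    msg["Content-Type"] = header_value
    return msg.get_content_charset()


print(charset_from_bom(b"\xef\xbb\xbfhello"))
print(charset_from_content_type("text/html; charset=ISO-8859-1"))
```

Both functions return `None` when the source declares nothing, which is the point at which falling back to guessing becomes reasonable.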

I don't think it's a problem. An application knows the source of the input. If it's from a form, use UTF-8 encoding in your case. Just verify the data provided is correctly encoded (validation).

Keep in mind that not all databases support UTF-8 in its full range. If it's a file, you won't save it UTF-8 encoded in the database but in binary form. When you output the file again, use binary output as well; then this is totally transparent. Your idea that a user can tell you the encoding is nice, but he or she can tell anyway after downloading the file, as it's binary. So I must admit I don't see the specific issue you raise with your question. But maybe you can add some more details about what your problem is.

If you're willing to 'take this to the console', I'd recommend enca. Unlike the rather simplistic `mb_detect_encoding`, it uses 'a mixture of parsing, statistical analysis, guessing and black magic to determine their encodings' (lol).

However, you usually have to pass the language of the input file if you want to detect such country-specific encodings. (Then again, `mb_detect_encoding` essentially has the same requirement, as the encoding has to appear 'in the right place' in the list of passed encodings for it to be detectable at all.) enca also came up here.

It seems that your question has been answered already, but I have an approach that may simplify your case. I had a similar issue when returning string data from MySQL, even with both the database and PHP configured to use UTF-8. The error only showed up when the strings were actually returned from the database. Finally, browsing the web, I found a really easy way to deal with it. Given that you can store all those kinds of string data in MySQL in different charsets and collations, all you need to do is set the connection charset to UTF-8, right in your PHP connection file, like this: `$connection = new mysqli($server, $user, $pass, $db); $connection->set_charset('utf8');` This means that you first save the data in any charset or collation, and it is converted only when it is returned to your PHP file.

Hope it was helpful!

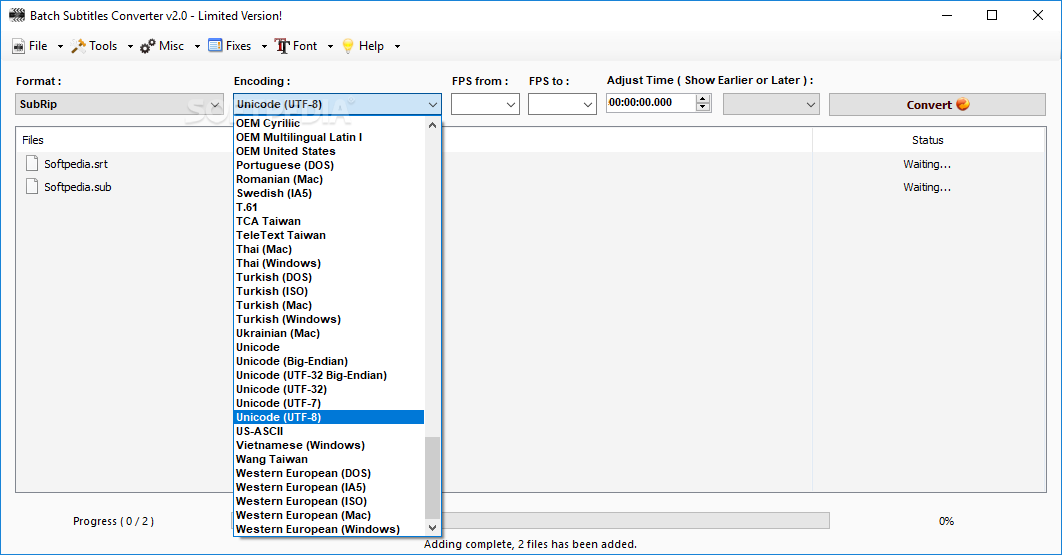

How to convert Thai characters to UTF-8? Q: After conversion, the Thai address is still not visible. The source file is an extract from an AS400, and column AF and column B contain Thai characters.

I tried to convert this file containing Thai addresses to UTF-8, but unfortunately the Thai script is still not visible after conversion. The odd part is that when users in Thailand convert it to UTF-8 using Notepad, it works. Please help. Thanks & regards.

A: Please follow the steps below.

1. Download and install the demo version, then run 'Demo for win'.
2. Select the source file encoding format '11=Thai(ISO-8859-11)' and the destination file encoding format '41=Unicode 8 Bit(utf-8)'. Set the source file path (for example, set File Filters to '.txt' and the file path to 'e: test') and click the 'Convert' button. You then get UTF-8 encoded files from the Thai encoded files.
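The same conversion can be sketched in Python without a GUI tool. This assumes the source bytes really are ISO-8859-11, as the answer states (an AS400 extract may be in a different code page, in which case the source encoding must be changed). The function names and file paths are hypothetical.

```python
from pathlib import Path


def thai_to_utf8(raw: bytes, source_encoding: str = "iso8859_11") -> bytes:
    """Decode legacy Thai bytes and re-encode them as UTF-8.

    Decoding is strict, so a wrong source_encoding guess raises
    UnicodeDecodeError rather than silently producing garbage.
    """
    return raw.decode(source_encoding).encode("utf-8")


def convert_file(src: str, dst: str, source_encoding: str = "iso8859_11") -> None:
    """Read src as legacy Thai and write dst as UTF-8 (paths are placeholders)."""
    Path(dst).write_bytes(thai_to_utf8(Path(src).read_bytes(), source_encoding))


# 0xE4 0xB7 0xC2 are the ISO-8859-11 bytes for the word "ไทย".
print(thai_to_utf8(b"\xe4\xb7\xc2").decode("utf-8"))
```

If the Thai text is still unreadable after such a conversion, the most likely cause is that the source encoding was guessed wrong, not that the UTF-8 step failed.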